MCUs Are Starting to Embrace AI — Arm at Embedded World 2026

Arm just showed the future of embedded AI at Embedded World 2026. Here's what actually happened, explained by an embedded software engineer with 15 years in the field.

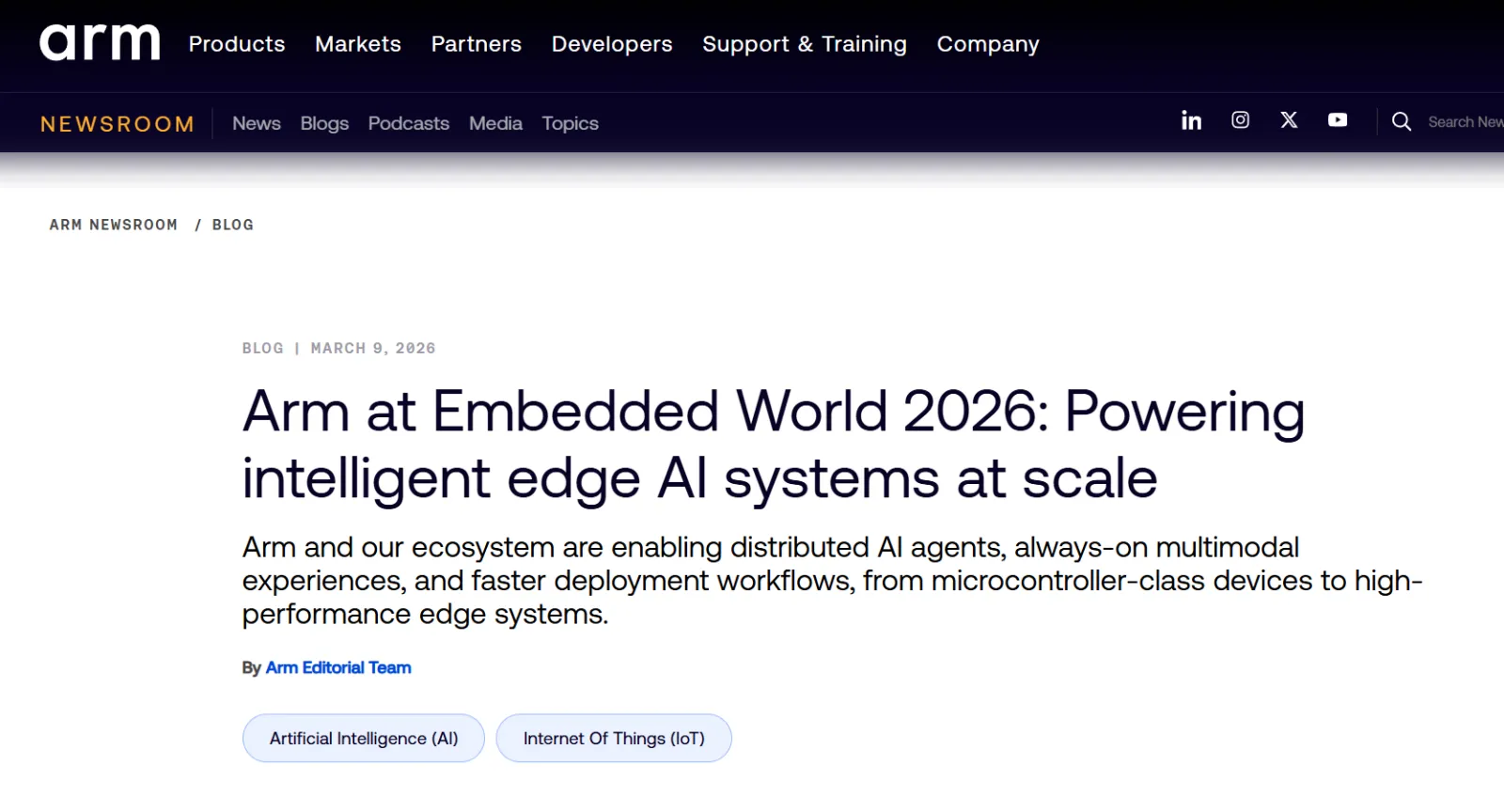

A factory floor. Cameras on the line. Robotic arms. Industrial controllers.

Until now, they all worked in isolation. The camera captures footage and sends it to the cloud. The cloud makes the decision. The robotic arm receives the command and executes it.

This week brought a clear signal that this structure is about to change.

Who Is Arm?

Before diving in — if you're not from the embedded world, here's the one-liner.

The smartphone in your hand, the MCU on the factory floor, the ECU in your car: there's a good chance every one of them runs on Arm architecture.

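Samsung, Apple, Qualcomm, TI, Infineon: all of them ship processors built on Arm cores. Nearly every smartphone processor is Arm-based, and Arm's Cortex-M cores are the de facto standard for 32-bit microcontrollers. In processor design, Arm is as close to a standard as it gets.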

Arm's official announcement channel is Arm Newsroom (newsroom.arm.com). This isn't a media write-up; it comes directly from Arm, so the information is straight from the source.

What Is Embedded World?

Think of it as the CES of the embedded industry.

The world's largest embedded systems trade show, held every year in Nuremberg, Germany. TI, Infineon, NXP, Arm — every major semiconductor company shows up to announce where the technology is heading that year.

This year, Embedded World 2026 ran March 10–12. The dominant keyword across the entire show floor?

Edge AI.

Arm's Core Message: "AI Moves Into the MCU"

Arm showed four demos at Embedded World. All four point in the same direction.

"Stop asking the cloud. Let the MCU decide on the spot."

Let me break each one down in plain terms.

Demo 1 — Devices That Talk to Each Other

Cameras, robotic arms, and industrial controllers discovering each other and coordinating in real time — without a central server.

Here's how it used to work:

Camera → Cloud → Decision → Robotic arm executes

Here's how it works now:

Camera MCU: "Defect detected!"

Robotic arm MCU: "Stopping the line now."

Controller MCU: "Reducing conveyor speed."

No cloud round-trip. Millisecond response. On the factory floor.

It keeps working even when the internet goes down. And it responds far faster, because there's no round-trip to a remote server in the loop.
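The announcement summary doesn't describe which protocol the devices use to find and talk to each other, so the following is only a minimal Python sketch of the idea: a hypothetical in-process publish/subscribe bus standing in for the local network. `LocalBus`, the `"defect"` topic, and the handler functions are made-up names for illustration, not Arm's implementation.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Handler = Callable[[dict], str]


class LocalBus:
    """Hypothetical peer-to-peer event bus: publishers hand events straight
    to subscribers, with no central server or cloud in the path."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> List[str]:
        # Deliver the event to every device listening on this topic and
        # collect what each one decided to do.
        return [handler(event) for handler in self._subscribers.get(topic, [])]


def robot_arm(event: dict) -> str:
    return f"arm: stopping line at station {event['station']}"


def conveyor_controller(event: dict) -> str:
    return f"conveyor: reducing speed at station {event['station']}"


bus = LocalBus()
bus.subscribe("defect", robot_arm)
bus.subscribe("defect", conveyor_controller)

# The camera MCU detects a defect and broadcasts it directly to its peers.
actions = bus.publish("defect", {"station": 3, "confidence": 0.97})
for action in actions:
    print(action)
```

On real hardware, `publish` would be a message on the plant's local network rather than a function call, but the shape of the decision path is the same: camera to arm to conveyor, without ever leaving the floor.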

Demo 2 — "Hey Siri" Without the Cloud

Voice recognition on smart speakers today? Apart from wake-word detection, most of it goes to the cloud.

That's a problem for factory equipment and automotive components. Tight power budgets. Privacy concerns. No guaranteed connectivity.

Arm showed this running entirely inside the MCU — powered by NPU acceleration.

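What's an NPU? It's a processing unit built specifically for neural-network computation, often a block on the same chip as the CPU (Arm's Ethos-U NPUs, for example, sit alongside a Cortex-M core). If the CPU is a generalist who handles everything, the NPU is a specialist who does nothing but AI math, and does it insanely fast at a fraction of the power.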
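To make "AI math" concrete: most of the work in quantized neural-network inference is integer multiply-accumulate (MAC) operations. The plain-Python sketch below spells out that pattern for a tiny int8 fully connected layer. The function names and values are illustrative only; an NPU executes many of these MACs in parallel in dedicated hardware instead of one at a time in a loop.

```python
from typing import List


def int8_dot(weights: List[int], activations: List[int]) -> int:
    """Dot product of two int8 vectors using a wide accumulator."""
    if len(weights) != len(activations):
        raise ValueError("vectors must have the same length")
    for v in weights + activations:
        if not -128 <= v <= 127:
            raise ValueError(f"{v} is outside the int8 range")
    acc = 0  # on hardware this would be a 32-bit register so sums don't overflow
    for w, a in zip(weights, activations):
        acc += w * a  # one multiply-accumulate (MAC) operation
    return acc


def dense_layer(weight_rows: List[List[int]], activations: List[int]) -> List[int]:
    # A fully connected layer is one dot product per output neuron,
    # i.e. rows x columns MACs in total.
    return [int8_dot(row, activations) for row in weight_rows]


w = [[12, -7, 33], [-128, 5, 90]]
x = [10, 20, -3]
print(dense_layer(w, x))  # [-119, -1450]
```

Even this toy layer needs 6 MACs; real models need millions per inference, which is why offloading them to specialized hardware pays off in both speed and power.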

Result: always-on voice capability without a cloud module, within existing power and cost constraints.

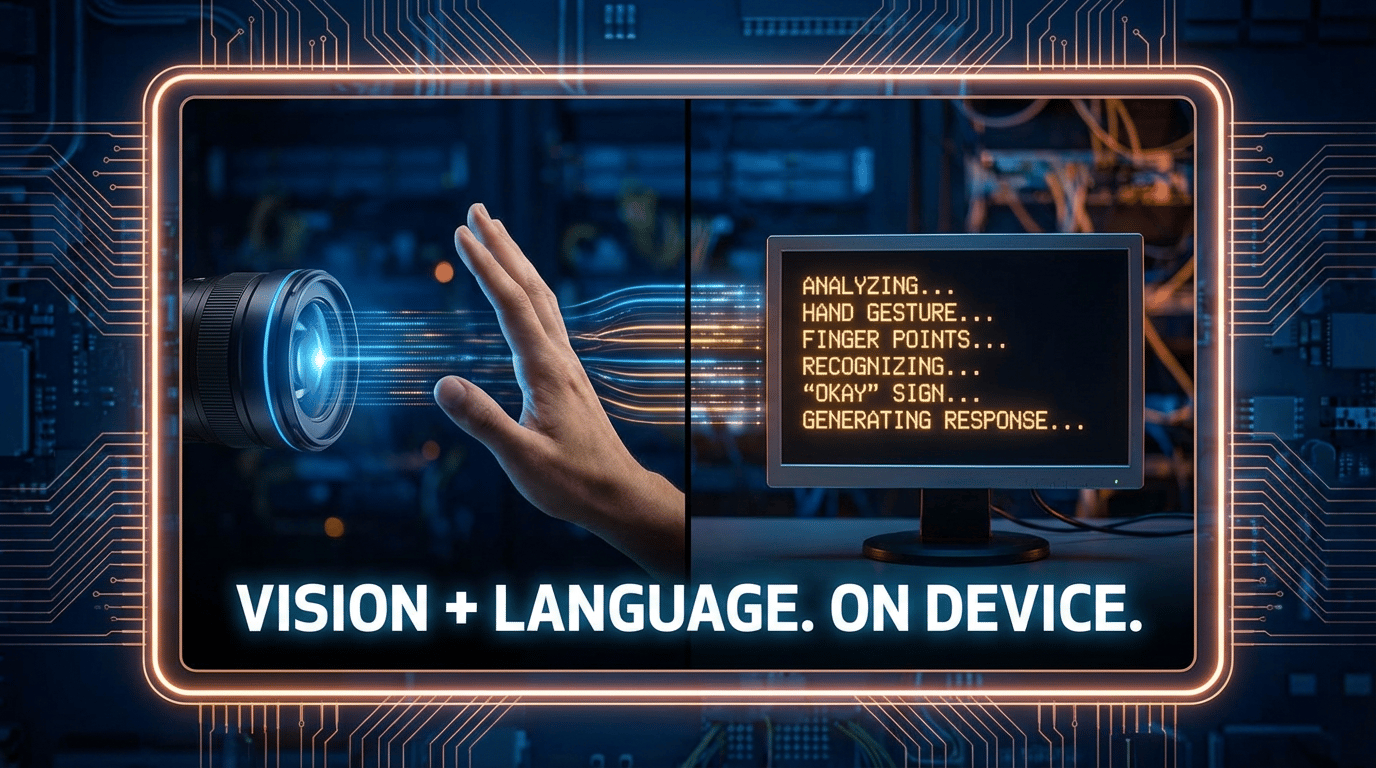

Demo 3 — Camera + LLM Inside One MCU

"Multimodal" sounds complicated. It's not.

It just means eyes (camera) + language (LLM) working together.

Camera captures an object → MCU identifies what it is → MCU generates a text response. Everything processed locally. Nothing leaves the device.
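The three steps above can be sketched as a single local pipeline. This is a toy under heavy assumptions: a nearest-centroid match over a made-up 3-value feature vector stands in for the on-device vision model, and a fixed text template stands in for the on-device LLM. The point is only the data flow (features in, label, text out) with no network call anywhere; `CENTROIDS`, `identify`, `describe`, and `process_frame` are hypothetical names.

```python
import math
from dataclasses import dataclass
from typing import Dict, Sequence, Tuple

# Hypothetical "known objects": feature vectors a real vision model would
# produce from camera frames. Values are made up for illustration.
CENTROIDS: Dict[str, Tuple[float, float, float]] = {
    "bolt": (0.9, 0.1, 0.2),
    "washer": (0.2, 0.8, 0.3),
    "nut": (0.4, 0.4, 0.9),
}


@dataclass
class Detection:
    label: str
    distance: float


def identify(features: Sequence[float]) -> Detection:
    """Stand-in for the on-device vision model: nearest-centroid match."""
    best_label, best_dist = "", math.inf
    for label, centroid in CENTROIDS.items():
        dist = math.dist(features, centroid)  # Euclidean distance
        if dist < best_dist:
            best_label, best_dist = label, dist
    return Detection(best_label, best_dist)


def describe(det: Detection, max_distance: float = 0.5) -> str:
    """Stand-in for the on-device LLM: a fixed text template."""
    if det.distance > max_distance:
        return "Unknown object, please check manually."
    return f"Detected a {det.label}."


def process_frame(features: Sequence[float]) -> str:
    # Identify, then describe; every step runs locally and nothing is transmitted.
    return describe(identify(features))


print(process_frame((0.85, 0.15, 0.25)))  # Detected a bolt.
print(process_frame((0.0, 0.0, 0.0)))     # Unknown object, please check manually.
```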

Medical example: a camera monitors a surgical site and, through on-device voice output, alerts the surgeon: "Bleeding detected, watch blood pressure." That footage shouldn't go to an external server; patient privacy rules make that very hard. On-device processing sidesteps the problem.

Same goes for automotive. A driver-facing camera detecting drowsiness shouldn't be streaming your face to a remote server. It shouldn't have to.

Demo 4 — Deploying AI Models Gets Less Painful

This is the one that hits closest to home for embedded engineers.

You train an AI model in Python. Now you need to get it onto an MCU.

Model conversion → Optimization → Driver integration → Memory fitting → Debugging → More optimization...

In practice, this process can take weeks. No matter how good the model is, the deployment step alone can blow up an entire project schedule.
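The "memory fitting" step in that list is, at its core, arithmetic. The sketch below is a back-of-the-envelope check under simplifying assumptions: weights live in flash, the peak set of live activations must fit in SRAM, and a fixed amount of SRAM (`reserved_ram_kib`, a guess) is held back for the rest of the firmware. The model and MCU sizes are hypothetical, not figures from Arm's demos.

```python
BYTES_PER_ELEMENT = {"float32": 4, "int8": 1}


def fits_on_mcu(num_params: int, peak_activations: int, dtype: str,
                flash_kib: int, ram_kib: int, reserved_ram_kib: int = 64) -> bool:
    """Rough memory-fitting check for a model on an MCU.

    Assumes weights are stored in flash and that the largest set of
    simultaneously live activations must fit in the SRAM left over after
    the application's own needs (reserved_ram_kib).
    """
    size = BYTES_PER_ELEMENT[dtype]
    weights_kib = num_params * size / 1024
    arena_kib = peak_activations * size / 1024
    usable_ram_kib = ram_kib - reserved_ram_kib
    ok = weights_kib <= flash_kib and arena_kib <= usable_ram_kib
    print(f"{dtype:>7}: weights {weights_kib:7.1f} KiB of {flash_kib} KiB flash, "
          f"activations {arena_kib:6.1f} KiB of {usable_ram_kib} KiB RAM -> "
          f"{'fits' if ok else 'does not fit'}")
    return ok


# Hypothetical model and MCU: 300k parameters, 50k activation values at peak,
# 1 MiB of flash and 256 KiB of SRAM.
for dtype in ("float32", "int8"):
    fits_on_mcu(300_000, 50_000, dtype, flash_kib=1024, ram_kib=256)
```

The same hypothetical model overflows both flash and RAM as float32 but fits comfortably as int8, which is why quantization usually sits right next to memory fitting in the pipeline.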

Arm showed a streamlined pipeline using Keil MDK v6 + ExecuTorch + Zephyr. Think of it like this: you wrote a recipe in Python, and now there's an automatic translator that adapts it to fit your tiny MCU kitchen — without you manually rewriting every step.

Shorter integration cycles. Faster time to market. Less engineering pain.

A 15-Year Embedded Engineer's Honest Take

Good announcements. The direction is right.

But from the trenches, a few realistic questions come to mind.

"Will MCU memory hold up?" Running an LLM on an MCU means a very small, heavily constrained model. Reaching production-quality results for mass-market devices will still take time.

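A quick calculation shows why memory is the first question. Weight storage alone is parameter count times bits per weight divided by 8; the sketch ignores the KV cache, activations, and application code, so real requirements are higher. The parameter counts and the 2 MiB flash budget are example values chosen for illustration, not specs of any particular MCU or model.

```python
MIB = 1024 * 1024


def weight_mib(num_params: int, bits: int) -> float:
    """Storage for the weights alone: params x bits / 8 bytes, in MiB."""
    return num_params * bits / 8 / MIB


def max_params(budget_mib: float, bits: int) -> int:
    """Largest parameter count whose weights fit in the given budget."""
    return int(budget_mib * MIB * 8 // bits)


FLASH_MIB = 2  # hypothetical flash budget available for model weights

for params in (1_000_000, 15_000_000, 110_000_000, 1_000_000_000):
    for bits in (8, 4):
        size = weight_mib(params, bits)
        verdict = "fits" if size <= FLASH_MIB else "too big"
        print(f"{params / 1e6:>6.0f}M params @ {bits}-bit: {size:8.2f} MiB -> {verdict}")

print("Largest 4-bit model that fits:", max_params(FLASH_MIB, 4), "params")
```

Even at 4 bits, a 2 MiB budget caps the weights at about 4.2 million parameters, while a 1-billion-parameter model needs roughly 477 MiB. On-MCU language models therefore have to be very small or lean on external memory.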
"Will the toolchain actually be smooth?" The automation in Demo 4 is a step in the right direction. But how stable it is in real production environments is a different question entirely.

"What about automotive?" Automotive has AUTOSAR and ISO 26262 functional safety requirements. New technology adoption is painfully slow by design, and edge AI still has mountains to climb before it reaches mass-production vehicles.

That said — the direction Arm showed at Embedded World 2026 is clear. And it's the right one.

What Embedded Developers Should Start Doing Now

- Understand NPU basics: know which operations can be offloaded to an NPU and why
- Get hands-on with Edge AI toolchains: TensorFlow Lite, ExecuTorch, on-device deployment workflows
- Learn on-device model optimization: quantization, pruning, model compression
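For a feel of what quantization and pruning actually do, here is a minimal pure-Python sketch of two of the simplest variants: per-tensor affine int8 quantization and magnitude pruning. Production toolchains such as TensorFlow Lite and ExecuTorch use their own, more elaborate schemes (for example per-channel scales and calibration data); this only illustrates the underlying idea, and the weight values are made up.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float, int]:
    """Affine (asymmetric) per-tensor quantization: q = round(x / scale) + zero_point."""
    lo = min(min(values), 0.0)  # include 0 in the range so zero is exactly representable
    hi = max(max(values), 0.0)
    scale = (hi - lo) / 255 or 1.0  # 256 int8 levels; guard against all-zero input
    zero_point = -128 - round(lo / scale)  # maps lo to -128
    q = [max(-128, min(127, round(x / scale) + zero_point)) for x in values]
    return q, scale, zero_point


def dequantize(q: List[int], scale: float, zero_point: int) -> List[float]:
    return [(v - zero_point) * scale for v in q]


def magnitude_prune(values: List[float], sparsity: float) -> List[float]:
    """Zero out the given fraction of weights with the smallest magnitude."""
    k = int(len(values) * sparsity)
    smallest = sorted(range(len(values)), key=lambda i: abs(values[i]))[:k]
    pruned = list(values)
    for i in smallest:
        pruned[i] = 0.0
    return pruned


weights = [-0.5, 0.02, 0.3, 1.2, -0.01, 0.75]
q, scale, zp = quantize_int8(weights)
restored = dequantize(q, scale, zp)
print("int8:", q, "scale:", round(scale, 5), "zero point:", zp)
print("max error:", max(abs(a - b) for a, b in zip(weights, restored)))
print("50% pruned:", magnitude_prune(weights, 0.5))
```

Quantization trades a small rounding error (about half a quantization step per weight) for a 4x size reduction versus float32. Pruning generally only saves memory or time once the runtime or hardware can actually exploit the zeros.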

AI isn't just a cloud story anymore. If you write embedded software, AI is coming to your domain. Better to be ready.

Wrap Up

Read Arm's full official announcement here: 👉 Arm Newsroom — Embedded World 2026

I'm an embedded software developer at an automotive company, shipping AI-powered web services on the side with zero prior web experience. Follow the build at aicraftlog.com.
